No one has the time or inclination to read (let alone understand) lengthy online privacy policies.

That’s the general consensus when it comes to one of the most important activities we ever undertake – visiting a website.

And while the costs of trying to read and understand a website’s privacy policy have been carefully itemized, it seems the costs of not reading a privacy policy are, well, not well understood.

No one should be disinclined or unable to commit, control, protect and set boundaries for their own life. And no one (businesses, government, or consumers) should have to face unmitigated risk as a result of privacy loss. As has been proposed before, maintaining personal data privacy online takes some work by all concerned.

Luckily for us, there’s now an app for that. If we can’t bring our own time, energy or smarts to the task, we can now bring AI to bear, using a new artificial intelligence tool called Polisis.

It reads and analyzes online privacy policies for us.

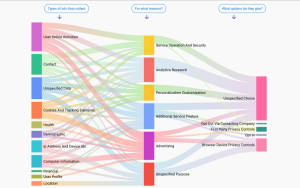

Once loaded, the browser extension displays its analysis on-screen, presenting information about the types of data a website says it collects, what the site says it will do with that data, and what, if anything, a user can do about it.

A new privacy protection tool uses artificial intelligence to analyze privacy policies, giving information about the types of data a website collects, what that site does with the data, and what, if anything, a user can do about it. Google’s privacy policy, as summarized by Polisis, is shown in this screenshot. Image credit: Polisis.

The on-screen displays look like multicoloured flow charts. Much as an online map gives road directions, they trace what happens to data shared with a website. Pop-up text box overlays give additional details about a particular online privacy policy, activity or end result.

Developers and researchers at École polytechnique fédérale de Lausanne in Switzerland, along with project partners at the Universities of Wisconsin and Michigan, have now released Polisis (and a companion chatbot tool called PriBot).

At the core of Polisis is the careful analysis of some 130,000 privacy policies using unique neural-network classifiers and fine-grained semantic analysis, as project team leader Hamza Harkous describes. AI was also brought to bear on more than one hundred privacy policies that had been reviewed and annotated by law students, in order to bring out even finer details about how a website operator treats and respects a user’s privacy.

The software tool is easily downloaded to a PC. Users can have a particular privacy policy analyzed simply by entering the site’s URL; thousands of website addresses are already loaded into the service, and users can pick from those or add their own.

Addresses are entered into the analysis tool by its users, who now number around 17,500, as Harkous reported in correspondence with WhatsYourTech.ca.

Polisis project team leader Hamza Harkous: ‘People are not well-empowered to take action’

“On average, since launching the new apps a few weeks ago, we’re getting one new user on the site every two minutes. Users spent 2 minutes and 30 seconds on average for each visit. We had 1,261 Chrome extensions downloads and around 1,200 Firefox extension downloads. Users have added 7,000 additional (new) websites to our tool. Overall, we have received a lot of positive feedback about the accuracy of the tools.”

Those numbers may not sound that impressive, but given that the tool comes from an academic institution with no marketing budget, a huge take-up was not expected, Harkous admits. “Although they are not huge numbers from an industry perspective, by academic measures they are quite large. We believe they might be the beginning of a greater move towards using AI to assist users in privacy decisions.”

Harkous was not ready to say the initial response showed a lack of interest or concern about privacy overall.

“I would say that people are generally caring about privacy. But they are not well-empowered to take actions via usable tools.” The keyword here is “usable” because bad tools or poorly written policy analysis can make people feel the solution is more burdensome than helpful.

Interestingly, another team at EPFL has developed an algorithm-based solution that determines in real time how much information a click reveals, for example when a user rates a potential online purchase.

“Yes, I’m aware of the work (on) Click Advisor,” Harkous said. “It’s a nice contribution on the theoretical foundations of privacy leakage. It definitely shares the goal of assisting user decision-making online.

“In my opinion, it would be great to extend that work with further usability studies that ask questions like: how much fine-grained controls would users be interested in having? Does that have to be per-click?

“That is what we are trying to start from in our work: what tools would users practically need to enable them for better online decisions?”


Sounds like a big toolkit!

The privacy policies and procedures we use now have failed to protect us. Complex privacy policies, ever-changing privacy settings and a whack of damaging privacy violations testify to that. The emergence of portable smart devices and more connected gadgets on the Internet of Things (IoT) only makes the problem worse.

With all the potential (and actual) threats to online privacy, could there really be one tool or one suite of tools to ensure protection?

“That is a very important point indeed,” Harkous responded. “Theoretically, yes there could be a single product that does all of that. Practically, it’s more of a business/implementation issue. There is simply no company/organization that does all of these together.”

We as users are left to search for multiple tools, each with a different interface, to achieve our privacy goals – or we give them up and click anyway.

As Harkous sees it, only technically experienced, privacy-oriented users take all the steps necessary. But he remains hopeful: “[W]ith the emergence of privacy as a business opportunity, there could be tools that combine all the tasks together.”

And the intelligence to use them properly.

-30-