Battlefield technologies and advanced AI (artificial intelligence) systems that can make autonomous military decisions in the field are being tested in Canada.

Sophisticated surveillance systems, radar that can see through walls, motion sensors with remote reporting capabilities, portable electronic monitoring gear and more were all used recently by Canadian soldiers to analyze and assess potential threats on a mock urban battlefield – the streets of Montreal.

During CUE 18, defence scientists and military personnel worked together to test over 50 technologies from five participating nations. PHOTO: CPLC JULIE TURCOTTE, 34e Groupe-brigade du Canada LQ2018-1018D.

In all, 50 different technologies or systems were tested in a series of military experiments called CUEs, or Contested Urban Environments tests. Artificial intelligence technology developed in the U.K. was used to help military planners figure out how to best help soldiers detect enemies, hidden threats or suspicious activity in large urban environments.

The tests are part of a broader trend toward greater use of machine-based or technology-enabled decision-making in disciplines where human decision-making has been the norm (not just military, but also financial, medical, operational and even legal arenas). However, critics of the increasing use of artificial intelligence systems say unintended and unanticipated consequences can result, such as “unnecessary or unacceptable harm” caused by autonomous weapons systems.

Known as the Contested Urban Environment experiment 2018, or CUE 18, the project involved testing AI-enabled military technologies.

This particular military AI trial was the latest Contested Urban Environment experiment involving soldiers from the U.K., U.S., Australia, Canada and New Zealand (collectively known as Five Eyes in military surveillance circles). Three weeks of testing in September involved more than 150 government researchers and scientists, as well as nearly 100 Canadian Armed Forces personnel, according to government statements.

Most of the battlefield testing was done during the day, although some experiments were conducted at night. Military personnel participating in the CUE were unarmed, according to reports.

The new AI system (known as SAPIENT, or Sensors for Asset Protection using Integrated Electronic Network Technology) was developed in a multi-million dollar four-year program, according to the Defence Science and Technology Laboratory (DSTL) in the U.K.

SAPIENT uses sensor arrays, automation and artificial intelligence to collect, collate and deliver crucial battlefield information to soldiers on the ground as and when they need it. Such information can include what’s deemed to be unusual, suspicious or threatening activity (just what constitutes suspicious or unusual activity in a big city was not specified).

System descriptions do indicate that individual sensors are programmed to make low-level decisions on their own, such as which direction to have a camera look or whether to zoom in, as part of other higher-level objectives. There’s a decision-making module which controls the overall system and makes some of the decisions normally made by human operators.
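The kind of low-level sensor decision described above can be illustrated with a minimal sketch. It is not SAPIENT's actual logic, which has not been published in this detail; the `Detection` and `Objective` types, the sector limits and the zoom threshold are all hypothetical names and values chosen for illustration. The idea is only that a central module sets a broad objective, and the sensor itself picks where to look and whether to zoom.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Detection:
    bearing_deg: float  # direction of the detection relative to the sensor
    confidence: float   # classifier confidence, between 0 and 1


@dataclass
class Objective:
    # Hypothetical high-level tasking handed down by a central decision module
    sector_min_deg: float
    sector_max_deg: float
    zoom_below_confidence: float


def choose_camera_action(
    detections: List[Detection], objective: Objective
) -> Optional[Tuple[float, bool]]:
    """Return (bearing to point at, whether to zoom), or None if nothing qualifies."""
    # Only consider detections inside the sector the sensor was asked to watch
    in_sector = [
        d for d in detections
        if objective.sector_min_deg <= d.bearing_deg <= objective.sector_max_deg
    ]
    if not in_sector:
        return None
    # Point at the most confident detection in the sector
    target = max(in_sector, key=lambda d: d.confidence)
    # Zoom in when the classification is still uncertain
    zoom = target.confidence < objective.zoom_below_confidence
    return target.bearing_deg, zoom


objective = Objective(sector_min_deg=0.0, sector_max_deg=90.0, zoom_below_confidence=0.7)
detections = [Detection(30.0, 0.4), Detection(100.0, 0.9), Detection(45.0, 0.6)]
action = choose_camera_action(detections, objective)
```

In this sketch the detection at 100 degrees is ignored because it falls outside the assigned sector, so the sensor points at 45 degrees and zooms in, with no human operator involved in that choice.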

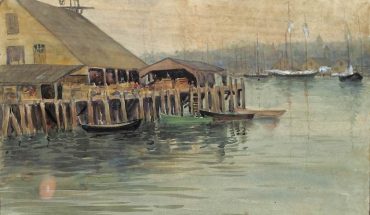

AI battlefield technology was tested in Contested Urban Environment experiments in Montreal (Picture: Ministry of Defence).

Some of the sensors that feed the AI system were carried by the soldiers; others were placed in fixed positions on the ground. Still others were high above ground, carried by drones or airplanes, transmitting AI-refined information to human operators in the field.

Autonomous sensor modules are designed to provide accurate object detection and classification over a large area in all weather conditions. Some sensors provide shape, tracking and description of objects in motion, providing an alert when the object crosses a boundary or passes through a prohibited area. Thermal imaging and other detectors are used to track location, trajectory and behaviours of objects or people in motion.
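A boundary-crossing alert of the sort described above can be sketched in a few lines. This is an illustrative assumption, not the method used in the trial: the prohibited area is modelled as a simple polygon, the tracked object as a list of (x, y) positions, and the function names `point_in_polygon` and `zone_entry_alerts` are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def point_in_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: count how many polygon edges a ray from p crosses."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through p?
        if (y1 > y) != (y2 > y):
            # x coordinate where the edge meets that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def zone_entry_alerts(track: List[Point], zone: List[Point]) -> List[int]:
    """Return the track indices at which the object enters the zone."""
    alerts = []
    was_inside = False
    for i, position in enumerate(track):
        now_inside = point_in_polygon(position, zone)
        if now_inside and not was_inside:
            alerts.append(i)  # object has just crossed into the zone
        was_inside = now_inside
    return alerts


# Hypothetical 10 x 10 prohibited area and a track that enters it twice
zone = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
track = [(-5.0, 5.0), (-1.0, 5.0), (2.0, 5.0), (5.0, 5.0), (12.0, 5.0), (8.0, 8.0)]
alerts = zone_entry_alerts(track, zone)
```

Only the transition from outside to inside raises an alert, so an object that lingers in the area is reported once per entry rather than on every position update.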

Officials say the system can help reduce human error, such as may occur during intense conflict situations, and also free up soldiers who are assigned to monitor existing surveillance technology. Instead of sitting and watching a bank of TV monitors connected to CCTV cameras, for example, those soldiers can be freed up for other military activity.

U.K. Defence Minister Stuart Andrew said of the trial deployment technology: “This British system can act as autonomous eyes in the urban battlefield. This technology can scan streets for enemy movements so troops can be ready for combat with quicker, more reliable information on attackers hiding around the corner. Investing millions in advanced technology like this will give us the edge in future battles.”

Scanning the urban battlefield, deciding what and how to monitor or surveil, the systems provide advance warning and alerts to soldiers (in this case, those soldiers were taking part in an experiment; in other cases, they will be engaged in urban warfare).

Soldiers test SAPIENT technology. The data gathered by SAPIENT can be used by the system to make autonomous decisions, independent of human operators. (Picture: Ministry of Defence).

Key to the success of the trial was determining what armed forces need to know about a city in order to operate effectively there. Montreal, with its dense downtown core of high-rise buildings and urban canyons in between, its uneven terrain punctuated by Mount Royal, even its historic Old Port district, was seen as an ideal place to test technologies that must work well in “the types of conditions in which future armed forces will have to operate”.

Patrick Maupin, the director of the Montreal CUE experiment, said the urban context was important in testing the new technologies: “The most interesting thing about CUE is that it brings together technologies that would otherwise only be tested in labs or in separate countries.”

Other technologies tested on the streets of Canada’s second-largest city included laser range finders that determine the distance of objects, infrared goggles and thermal optic devices, and a kind of armoured suit or exoskeleton worn by soldiers as much for protection as for added strength and resilience.

However, critics of the increasing use of artificial intelligence systems (in this case military AI specifically, but as mentioned, financial, medical, operational and even legal arenas are also affected by artificial intelligence) say undesirable risks can result, such as the “unintended, unnecessary, or unacceptable” ones caused by weapons systems.

Avoiding and preventing those risks is the mission of a non-profit organization called Article 36. Richard Moyes, managing director of the group, has long spoken out against autonomous weaponry and other arms developments, and he and the group he heads have regularly warned against the greater use of machine decision-making.

Be it in Montreal or elsewhere.

This medieval fortress-looking building, an old industrial grain storage facility known as Silo 5, is a favourite locale for urbex (urban exploration) activities in Montreal. It was also the location for some serious battlefield technology testing. Located in the city’s Old Port district, the building and surrounding area served as one location where soldiers tried out optical sensing systems, vehicle barriers and perimeter security systems, while researchers used various unmanned aerial and ground-based vehicles to scan and analyze the area. Heritage Montreal image.

The Contested Urban Environment experiment is in its second year. The first experiment was held in Australia in November 2017, and the third one will be held in the United States in 2019. The final one, slated for the U.K., is in the planning stages for 2020. Technology tested during this time could be available to military personnel by 2025.

-30-