Can you spot the difference between a harmless passer-by and a potential criminal?

Don’t worry — apparently HP RoboCop can.

That’s the name given to a high-tech autonomous machine that has become an official member of the Huntington Park Police Department. Yes, the southern California police force has a robot on the beat, and it’s been patrolling parks in and around the L.A. suburb, acting as an extra pair of eyes where or when human police cannot patrol.

High-definition eyes with a 360-degree field of view are just part of the tech package mounted in this 400 lb. mobile device.

Those eyes, by the way, are high-definition of course, and they have a 360-degree field of view (they’re mounted on top of a 400 lb. mobile device with a rotating head).

HP RoboCop is such a unique piece of technology, it even has its own Twitter page.

But RoboCop is not a trendy one-off: the worldwide market for law enforcement robots will pass $5 billion USD in the next couple of years, according to estimates from Wintergreen Research in its recent report, Law Enforcement Robots: Market Shares, Strategy, and Forecasts, 2016 to 2022.

Toronto’s marine police squad uses an underwater robot with a colour video camera and a manipulating arm that can be used to retrieve objects from the lake bottom.

HP RoboCop is a K5 model security robot manufactured by Knightscope, Inc., a Silicon Valley tech company. Similar products currently on the market include unmanned ground vehicles from Northrop Grumman; smaller ‘pack-bots’ from Endeavor Robotics (now part of a tech conglomerate called FLIR; Endeavor’s predecessor has sold at least 20 military robots to Canada’s Armed Forces); intelligent military and tactical robots from QinetiQ; and other autonomous mobile machines from companies like Nimbo and SMP that can analyze human activities and surrounding environments in real time.

(The Toronto Police Service can sometimes be circumspect with descriptions of how it uses technology, but it does talk about its use of robotics in terms of the tethered underwater robot used by its Marine Unit: officers on board a vessel or on land can remotely control the ‘wet-bot’, which can reach depths of about 150 metres, and monitor data signals sent back and forth between the robot and a computer. It’s equipped with sonar, a colour video camera and a manipulating arm that can be used to retrieve objects from the lake bottom. The camera can be used to survey the hull of a boat for damage or for foreign objects that may have been attached for criminal purposes such as smuggling narcotics.)

Sights, sounds and data gathered by the RoboCop are monitored in the Knightscope Security Operations Center (“KSOC”).

The land-based HP RoboCop is called a “fully autonomous security data machine” that’s loaded with sensors, capable of detecting and recognizing faces, reading and checking license plates, even tracking smartphones. Without the need for remote control, the machine brings a physical presence to its patrol areas, but the sights, sounds and data it gathers are accessible remotely, through Wi-Fi and other communication channels. It’s all presented in a browser-like interface, the Knightscope Security Operations Center (“KSOC”).

Available in Canada, the programmable Nimbo security robot is built on technology from several leading companies: its mobility platform is powered by Segway Robotics, and backed by Intel and Xiaomi. It too has a whack of data sensors and image processors on board, including Intel’s RealSense and Salient’s CompleteView VMS. 4G, Wi-Fi, two-way audio and other communications tools are available, and the robot can transform into a Ride Mode that carries a security staffer at speeds up to 11 mph.

SMP security robots and autonomous vehicle control systems come with AI-powered thermal and panoramic surveillance capabilities.

Security robots from SMP (known as the S5 series, they too have representation in Canada) are equipped with high-definition cameras and access to cloud-based recognition software that can recognize people up to 100 metres away. They have long-range loudspeakers, autonomous vehicle control systems and AI-powered thermal and panoramic surveillance capabilities. Images are transmitted via 4G or Wi-Fi.

Of course, the robotic additions to municipal police forces are not cheap: investigations into and reports about the Huntington Park scenario indicate that the force there is paying $8,000 a month for the robot, or some $96,000 yearly. The average police patrol officer’s salary in California in 2019 is $62,081.
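
For readers who want to check the math, the comparison can be sketched in a few lines of Python. This is a rough illustration only: it uses just the two figures cited above, the function and variable names are made up for this example, and it ignores benefits, overtime, maintenance and anything else not stated in the reports.

```python
# Figures cited in the article; everything else here is illustrative.
MONTHLY_ROBOT_FEE_USD = 8_000      # reported monthly cost of HP RoboCop
AVG_OFFICER_SALARY_USD = 62_081    # average California patrol officer salary, 2019


def annual_cost(monthly_fee: int) -> int:
    """Convert a monthly fee into a yearly total."""
    return monthly_fee * 12


def cost_gap(robot_annual: int, officer_salary: int) -> int:
    """Return how much more the robot costs per year than the salary figure."""
    return robot_annual - officer_salary


robot_annual = annual_cost(MONTHLY_ROBOT_FEE_USD)
gap = cost_gap(robot_annual, AVG_OFFICER_SALARY_USD)
ratio = robot_annual / AVG_OFFICER_SALARY_USD

print(f"Robot, yearly: ${robot_annual:,}")
print(f"Gap over average salary: ${gap:,}")
print(f"Robot cost as a multiple of salary: {ratio:.2f}x")
```

On these numbers alone, the robot costs roughly one and a half times the average officer's salary, a gap of about $34,000 a year.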

But costs are not the only obstacle faced in the greater use of machine-based or technology-enabled decision-making robots in various disciplines where human decision-making has been the norm. Critics of the increasing use of autonomous systems say unintended and unanticipated consequences can result, such as the “unnecessary or unacceptable harm” caused by weapons systems driven by machine learning and AI programs that can have biases baked into them.

A jogger on a cool morning, or a potential criminal on the run? Autonomous machines like the Nimbo need to know the difference.

How we design, regulate or even prohibit some uses of police robots requires a regulatory agenda now to address the foreseeable problems of the future, says Elizabeth E. Joh, a Professor of Law at the University of California, Davis School of Law. In her comprehensive report called Policing Police Robots, she makes clear the stakes we’re facing: “We can expect that at least some robots used by the police in the future will be artificially intelligent machines capable of using legitimate coercive force against human beings.”

Because automated machines of many types are programmed to adjust their behaviour according to what they have learned, Joh points out that “robots with artificial intelligence will behave in ways that are not necessarily predictable to their human creators. We will have to address how much coercive force police robots should possess, and to what degree they should be permitted to operate independently.”

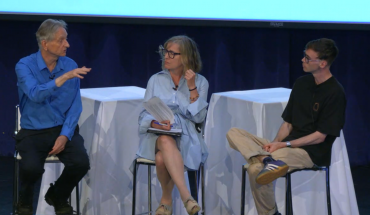

Reflecting the urgency of these issues, they were among the topics of discussion at World Legal Summit Toronto, an international gathering of lawyers, advisors, investors and business people. The one-day event in August was followed by an online session this month, and a final report is due out in October to address three main topics: Identity and Personal Governance; Autonomous Machines; and Cyber Security and Personal Data.

Because automated machines of different types are programmed to adjust their behaviour according to what they have learned, they may not behave in ways that are predictable.

-30-