Controlling hate speech online is just another form of unwarranted censorship, a kind of government mission creep. Not controlling hate speech online is another form of condoned violence, racism, and democratic dysfunction.

If you have an opinion (and you are bound to have at least one) about social media, or the quality of content that’s found there, or the degree to which such content should be regulated – or not – by legislation, the government wants to know.

The Canadian federal government has proposed a new digital safety commission with the power to regulate online content. The legislation to create the new agency is expected in the fall. In the meantime, the government has released an outline of the proposed duties, responsibilities and powers of a new Digital Safety Commissioner of Canada. It is also holding a public consultation on the need for, and implementation of, laws to combat harmful online content, including criminal content and content of national security concern.

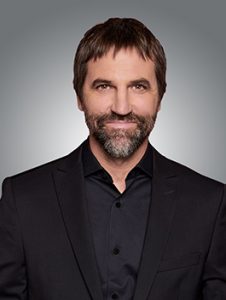

Heritage Minister Steven Guilbeault has proposed a new digital safety commission in Canada, one that would be charged with tackling hate speech and other harmful content on social media platforms.

The government says it plans to hold roundtable discussions with stakeholders and to receive input from Canadians on the proposed framework. Comments will be accepted until September 25, and Canadians can submit them now by email to pch.icn-dci.pch@canada.ca.

Canada is one of several countries around the world looking at new ways – new laws – to address online speech, good or bad, hateful or not. The digital safety proposals are among several initiatives by the current federal government to reconfigure various laws impacting media in Canada, including streaming media services, Internet privacy regulations, and broadcast content.

“The Government of Canada is committed to confronting online harms while respecting freedom of expression, privacy protections, and the open exchange of ideas and debate online,” reads a discussion guide that lays out the government’s plans for “a safe, inclusive and open online environment”.

The proposed legislation is part of an overall strategy intended to combat hate speech, terrorist content, and child sexual exploitation on social media platforms including Facebook, Instagram, Pornhub, TikTok, Twitter, and YouTube.

These platforms would be classed as Online Communication Service Providers, and they would be responsible for monitoring content on their platforms, reviewing complaints, and ultimately removing harmful posts that fall into one of five categories: hate speech, child sexual exploitation, non-consensual sharing of intimate images, incitement to violence, and terrorist content.

Fines for non-compliance could reach $25 million, based on a percentage of the enterprise’s total revenue – and that means global revenue, not just domestic.

The proposals include a mechanism whereby a newly appointed Digital Safety Commissioner could ask the Federal Court to order an Internet Service Provider (such as Rogers or Bell) to block all access to a social media platform found to persistently ignore or refuse requirements to remove harmful content.

The five categories of harmful online content described in the digital safety proposal are drawn from those already defined in Canada’s Criminal Code, and they would be amended to match any changes to the Criminal Code, such as those outlined in Bill C-36.

The Office of the Privacy Commissioner of Canada is looking into the pornography site Pornhub over allegations that it shares content without consent, a matter that could also fall under the auspices of a new Canadian digital safety commission. Privacy Commissioner Daniel Therrien is also commenting on another proposed law, Bill C-11, and its attempts to protect Canadians’ privacy online, including in the use of facial recognition systems. Photo of Privacy Commissioner of Canada Daniel Therrien by Dave Chan.

The proposed Criminal Code amendments define “hatred” as “the emotion that involves detestation or vilification and that is stronger than dislike or disdain” and that is “motivated by bias, prejudice or hate based on race, national or ethnic origin, language, colour, religion, sex, age, mental or physical disability, sexual orientation, gender identity or expression, or any other similar factor.”

This definition does not extend to content that merely “discredits, humiliates, hurts or offends.”

Whether offensive content is called online hate, cyber hate, or something else, it is widely encountered and seemingly growing in its reach: recent surveys in Canada indicate that online hate crimes account for 11 per cent of overall hate crimes.

Hate targeting Muslims accounted for the largest share of reported online hate crimes, at 17 per cent, followed by hate based on sexual orientation at 15 per cent. Fourteen per cent of reported cyber hate crimes targeted the Jewish population, and 10 per cent targeted Black Canadians.

Uttering threats, at 35 per cent, was the most common type of reported cyber hate crime, followed by public incitement of hatred (18 per cent) and criminal harassment (15 per cent).

Along with a new Digital Safety Commissioner, the proposals call for an appeals or challenge process, managed by a Digital Recourse Council, and an Advisory Board.


The Canadian federal government has proposed a new digital safety commission with the power to regulate online content. Illustration: Wikimedia Commons