Let’s face the facts: new privacy and security tools to block the ever-increasing use of facial recognition are needed.

Luckily, some University of Toronto tech grads say they have the solution.

Facial recognition is bringing new ways to collect demographic information about each and every one of us by linking visual imagery with existing datasets of shopping habits, personal preferences, stated opinions and more. From the airport to the zoo, on city streets and urban squares, with stops at shopping malls and sports arenas, facial recognition and detection tools are watching. Social, ethical and technical concerns about big data and privacy issues are one result.

As a social phenomenon, selfies are fodder for much discussion and debate. As computer assets, they are fuel for developments in facial detection and recognition technology.

We know the face can reveal lots of basic information, like gender and age (perhaps only approximately). But social media managers, demographers, government agents and other folks can learn a lot more about us from our photos: important clues about emotions, cultural background or ethnicity, and even sexual orientation can be derived through image analysis. The fact that much of that data is gathered, shared and analyzed without the user’s knowledge or consent, often through third-party partnerships, only complicates matters. In fact, some Facebook users in the U.S. have filed a class action lawsuit, saying the social media network used facial recognition on photos without permission.

Of course, technical data about the image (type of camera or smartphone used to take the shot; the time of day and geo-location where the shot was taken) are gathered along with the subject matter.

So when you upload a photo of yourself or your friends to a search engine or social media platform, artificial intelligence (AI) and machine learning (ML) systems have more data points to work with – more information to learn from – and they become more effective in detecting, identifying and creating a narrative for the human faces they encounter.

(That’s the idea at any rate; it is early going still, and some facial recognition systems are making some serious mistakes.)

While researching the issues and developments around facial recognition, a former University of Toronto graduate student created a simple, effective way for people to protect their online identity by applying photo filters that reduce the chance of online facial detection.

It’s called FaceShield, and in many ways it turns the tables on face recognition technology by using many of the same capabilities in reverse: rather than detect faces, it filters or obscures them in such a way that face recognition systems can’t or don’t work.

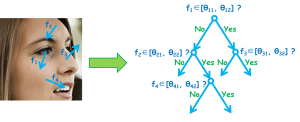

FaceShield’s proprietary algorithm generates “noise” on the photo, applying the visual interference to those areas of a photo that face detectors most naturally recognize, such as a person’s eyes, nose or jaw.

Facial recognition tools make use of highly detailed algorithms and comprehensive datasets to identify the human face. Image: Shengcai Liao, National Laboratory of Pattern Recognition, Institute of Automation, Chinese Academy of Sciences.
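For the curious, here is a rough idea of what "noise applied to one area of a photo" can look like in code. This is only an illustrative sketch, not FaceShield's method: the company's algorithm is proprietary, and tools of this kind typically compute their interference against a specific face detector rather than using random values. The function name `add_region_noise`, its parameters and the region box are all made up for this example, and plain random noise like this should not be expected to fool a real detector.

```python
import random


def add_region_noise(image, region, strength=25, seed=None):
    """Return a copy of a grayscale image with random noise inside one box.

    Hypothetical helper for illustration only (not FaceShield's algorithm).

    image    -- list of rows, each a list of ints in the range 0..255
    region   -- (top, left, bottom, right); bottom and right are exclusive
    strength -- largest change, up or down, applied to any one pixel
    seed     -- optional seed so the result is repeatable
    """
    rng = random.Random(seed)
    top, left, bottom, right = region

    # Copy every row so the original photo is left untouched.
    noisy = [row[:] for row in image]

    # Only pixels inside the box (clipped to the image) are changed.
    for y in range(max(top, 0), min(bottom, len(image))):
        for x in range(max(left, 0), min(right, len(image[y]))):
            delta = rng.randint(-strength, strength)
            # Clamp so the result is still a valid pixel value.
            noisy[y][x] = min(255, max(0, image[y][x] + delta))
    return noisy
```

Calling `add_region_noise(photo, (2, 1, 4, 4), strength=25)` on a small grayscale grid would disturb only the pixels in rows 2 to 3 and columns 1 to 3, standing in for, say, the area around an eye, and leave the rest of the image exactly as it was. A low `strength` keeps the change subtle; a higher one makes it more visible, which loosely mirrors the trade-off between FaceShield's lighter and heavier filter settings.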

The tactic is to fool face detection technology (while still having a photo that’s worth looking at), but the strategy, as one of FaceShield’s founders describes, is to reduce threats from, and to enhance protection for, personal privacy and security.

(One might reasonably ask how uploading a photo to any website leads to privacy these days; in this specific case, the website has no obvious privacy policy nor any stated commitments as to the handling of uploaded images. In response to the question, FaceShield stated in an email that “we do not store any uploaded photos on the site and DO NOT collect any user data”.)

“While the general population is becoming more aware of online privacy and security issues, the vast majority is not aware that their facial data is being mined to create digital profiles for a variety of uses by third parties, which is a huge personal privacy violation,” Avishek ‘Joey’ Bose, founder and president of FaceShield, described in a release. “We discovered that we could misguide a face detector into failure through advanced algorithms and technology that we’ve turned into a simple service for everyone to protect their identity online. The reality is people want to steal your data – and you need to protect it if you don’t want it to land in the wrong hands.”

Reached later via email, Bose elaborated on the risks to our data, photographic or otherwise: “First, a malicious site could indeed mine user data from photos from browsing patterns on their website to create a digital profile. In this case, the fear is even greater as one modality of the data is someone’s actual face which has a variety of digital indicators, i.e. age, gender, ethnicity. Second, even if a site doesn’t use someone’s digital data for any malicious purpose they might sell their data to another entity which may, in fact, do whatever they want with it. This is a fairly common business model and one such that Facebook and other social media sites use.”

His final point could be applied across the ‘Net: “[T]he more important ethical question is that people should always be in control of the digital data they produce and at the moment they are powerless to do so.”

(As a master’s candidate at U of T, Bose worked in the Faculty of Applied Science & Engineering, where he and professor Parham Aarabi have long been developing tools for facial recognition and detection blocking. Bose went on to pursue his PhD at McGill, where he’s creating other AI tools and solutions.)

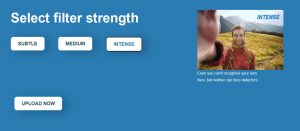

Right now, FaceShield is available as an online demo (accessible by desktop and mobile browser), where you can upload an image and see how one of three different levels of shielding looks.

There’s the Subtle option, which uses a discreet filter so the image looks as good as it can (and as identical as possible to the original) while still, according to FaceShield, breaking certain face detectors.

The Medium and Intense filter options increase the changes seen in the image, breaking many more face detection systems, but running the risk of making any face in the image unrecognizable to humans.

Aarabi and Bose tested their FaceShield system on an industry-recognized collection of faces (used by many facial recognition systems developers) called the 300-W face dataset; in order to best train AI-based face recognition capabilities, it includes more than 600 faces with a wide range of characteristics, including gender, ethnicity, physical backgrounds, lighting conditions and more.

FaceShield is said to have reduced the percentage of detectable faces to less than one per cent (from the dataset’s 100 per cent ID rating).

Plans call for a stand-alone downloadable app to be available, and the company is receiving funding from M2 Ventures for further development of its facial detection blocker.

# # #

The latest in a series of calls for immediate government regulation and ethical industry responsibility regarding developments in facial recognition came to my attention shortly after this story was posted.

It’s from Brad Smith, president and chief legal officer at Microsoft.

His latest blog: https://blogs.microsoft.com/on-the-issues/2018/12/06/facial-recognition-its-time-for-action/

-30-

So I’m sitting with a bunch of friends and workmates at our festive holiday get-together, and at one point people start pulling out their smartphones to pass around, sharing and showing some photos of each other, in sometimes, well, let’s say silly situations.

I start making some noise about Facebook, and criminal organizations, and facial recognition, and third-party trackers. Not great topics to bring up over the holidays, I find, and the photo sharing continues. At various points, people turn to the smartphone owner / photo master to say, ‘Can I show this photo?’

Now, that’s among friends! Sitting around a dinner table! Seems there is still some concern over who sees what…so how to explain Facebook?

At about the same time, the company in question posts a note:

(see blogpost at https://developers.facebook.com/blog/post/2018/12/14/notifying-our-developer-ecosystem-about-a-photo-api-bug/)

Seems that, yet again, its own security and data protection policies have failed, and that millions of photos may have been shared with the wrong person. The wrong third party, actually. Through a so-called buggy API, access to photos that people wanted to share on Facebook, and even to photos they didn’t want to share, was open and unprotected.

Hmmm….think Mark will join us at our next dinner for some….shared conversation?